Dutch certificate authority DigiNotar revealed that the fake Google certificates it signed were the result of an intrusion into its systems. They didn't give any details on how this was done.

I would assume the attacker didn't physically enter DigiNotar's facilities but instead accessed their network through the Internet. If so, this is yet another case of a security system being breached because the owner did not keep highly sensitive assets properly segregated from computers with access to the open internet. RSA are not alone.

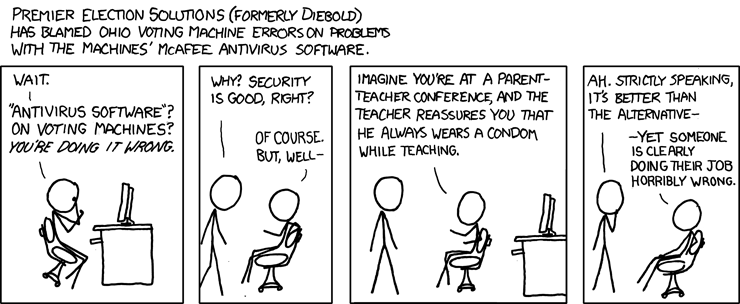

Or as Randall Munroe of XKCD puts it:

Wednesday, August 31, 2011

Tuesday, August 30, 2011

Security paradigm shift: Glitching the XBOX 360

In the mid-90s a new kind of attack hit the smart card industry - the glitch attack. Markus Kuhn and Ross Anderson described this attack in a paper from 1996:

Power and clock transients can also be used in some processors to affect the decoding and execution of individual instructions. Every transistor and its connection paths act like an RC element with a characteristic time delay; the maximum usable clock frequency of a processor is determined by the maximum delay among its elements. Similarly, every flip-flop has a characteristic time window (of a few picoseconds) during which it samples its input voltage and changes its output accordingly. This window can be anywhere inside the specified setup cycle of the flip-flop, but is quite fixed for an individual device at a given voltage and temperature.

So if we apply a clock glitch (a clock pulse much shorter than normal) or a power glitch (a rapid transient in supply voltage), this will affect only some transistors in the chip. By varying the parameters, the CPU can be made to execute a number of completely different wrong instructions, sometimes including instructions that are not even supported by the microcode. Although we do not know in advance which glitch will cause which wrong instruction in which chip, it can be fairly simple to conduct a systematic search.

Glitch attacks undermine what is perhaps the most common and fundamental assumption (law #1) of secure computing - that software will always run as it was coded. The breaking of this paradigm created a situation in the late 90s in which pretty much every smart card in the world could be hacked at a low cost. It took the smart card industry several years to design and deploy new hardware that was resistant to this kind of attack (solutions include various forms of input regulators, filters and detectors). Attempts to prevent this form of attack using special secure coding techniques proved ineffective - you can't really solve a security hole using software if you can't rely on the software running as coded.

Yesterday a hacker named gligli announced that he performed such a glitch attack on the Xbox 360, the details of which he described in a Free60.org wiki:

We found that by sending a tiny reset pulse to the processor while it is slowed down does not reset it but instead changes the way the code runs, it seems it's very efficient at making bootloaders memcmp functions always return "no differences". memcmp is often used to check the next bootloader SHA hash against a stored one, allowing it to run if they are the same. So we can put a bootloader that would fail hash check in NAND, glitch the previous one and that bootloader will run, allowing almost any code to run.

As far as I know this is the first successful glitch attack performed on a consumer electronics device; first but not last. Consumer electronics (CE) device security engineers have never made the paradigm shift to a world with glitch attacks. It is quite likely that a similar glitch attack can be done on other CE devices, be they other game consoles, cell phones or tablets. This is really big news and it's surprising that it hasn't made more waves in the security blogosphere.

One of the reasons CE devices have been considered less susceptible to glitch attacks is that they run at a much higher clock frequency (many smart cards run at under 5 MHz while modern CE devices run at over 200 MHz). The Xbox 360 helpfully has a signal that allows hackers to reduce the clock frequency to 520 kHz - making glitch attacks much easier. If the Xbox security team had more smart card design experience they probably wouldn't have made such a mistake.
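To make the memcmp bypass in gligli's description concrete, here is a toy software model of a bootloader hash check. It is only an illustration: the function names are hypothetical, SHA-1 is an assumption (the write-up only says "SHA hash"), and the `glitched` flag stands in for the hardware fault that makes the comparison report "no differences".

```python
import hashlib


def memcmp(a: bytes, b: bytes, glitched: bool = False) -> int:
    """Toy memcmp: returns 0 when the buffers match, non-zero otherwise.

    The `glitched` flag is a stand-in for the reset-pulse fault, which
    makes the comparison return "no differences" regardless of input.
    """
    if glitched:
        return 0
    return 0 if a == b else 1


def next_stage_allowed(image: bytes, stored_hash: bytes, glitched: bool = False) -> bool:
    """Hypothetical boot check: run the next stage only if its hash matches."""
    computed = hashlib.sha1(image).digest()  # SHA-1 is an assumption
    return memcmp(computed, stored_hash, glitched) == 0


official = b"official bootloader"
stored = hashlib.sha1(official).digest()

print(next_stage_allowed(official, stored))                     # legitimate image
print(next_stage_allowed(b"modified bootloader", stored))       # rejected normally
print(next_stage_allowed(b"modified bootloader", stored, True)) # accepted when glitched
```

The check itself is coded correctly; it fails only because the hardware no longer executes it as written, which is why software-only countermeasures are of limited help.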

Monday, August 29, 2011

Who owns your identity? The UDID in iOS 5

As you may have read, Apple has removed from iOS 5 the API that allows applications to access the UDID (Unique Device IDentifier). The UDID is a non-modifiable unique ID given to each iOS device and is used by many applications to identify a specific device.

Why is Apple preventing application developers from accessing the UDID? Two possible security reasons for this are to protect user privacy and to protect the secrecy of the UDID.

User privacy: Though the UDID identifies the device and not the user, many applications have access to both the device UDID and the user's identity. Such applications could be used to create a mapping of users to UDIDs, which could then be used to identify users of applications that don't have access to the user's ID. For example, let's say a user has both a Facebook app and a porn app on their iOS device. Assuming the porn app doesn't require the user to enter any personal identifier, the user can assume that the distributors of the porn app don't know their identity. But since the Facebook app has access to both the UDID and the user's Facebook account, it is possible (for Facebook) to generate a mapping of UDIDs to Facebook accounts, and this could subsequently be used by the porn app distributor to identify users of the porn app.

UDID secrecy: There is reason to believe that some Apple applications use the UDID as a secret value. Apple cannot rely on other applications with access to the UDID to keep this value a secret.

A conspiracy theorist might think that in fact the reason Apple is doing this is to keep the UDID to itself. As TechCrunch notes:

If Apple does continue to use UDID for itself but denies it to developers that would be an “extremely lopsided change.” It would give Game Center and iAds yet one more advantage over competing third-party services.

In this case I'm voting with the conspiracists. If Apple wanted to protect the secrecy of the UDID or to protect user privacy, they could easily have replaced the current UDID API with a new API that still gives application developers a reliable unique identifier for the device without compromising either the secrecy of the UDID or the privacy of iOS users. Specifically, Apple could have replaced the UDID API with one that returns a keyed one-way hash of the UDID, using an application-specific identifier (e.g. the App ID) as the key.
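A minimal sketch of what such a replacement API might compute, assuming HMAC-SHA256 as the keyed one-way function. The function name, the choice of HMAC-SHA256 and the example UDID and App ID values are illustrative assumptions, not anything Apple has published.

```python
import hashlib
import hmac


def app_scoped_device_id(udid: str, app_id: str) -> str:
    """Derive a per-application device identifier from the UDID.

    Hypothetical sketch: the App ID is used as the HMAC key and the UDID
    as the message, so each app sees a stable but different identifier.
    """
    mac = hmac.new(app_id.encode("utf-8"), udid.encode("utf-8"), hashlib.sha256)
    return mac.hexdigest()


# Made-up example values, for illustration only.
udid = "0123456789abcdef0123456789abcdef01234567"
id_for_app_a = app_scoped_device_id(udid, "com.example.social")
id_for_app_b = app_scoped_device_id(udid, "com.example.video")

print(id_for_app_a == app_scoped_device_id(udid, "com.example.social"))  # stable per app
print(id_for_app_a == id_for_app_b)                                      # differs across apps
```

Each application still gets a reliable identifier for the device, but two applications cannot correlate their identifiers with each other, and the raw UDID is never handed out.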

I'm sure the security engineers at Apple are smart enough to have thought of this. The fact that they didn't do so but simply removed the UDID API tells me that their goal was not to enhance security but to gain exclusivity on the device identity. Having such exclusive ownership of devices' identity gives Apple a great advantage in the development of identity-dependent applications including DRM.

Sometimes security is just an excuse for increasing control.

Thursday, August 25, 2011

CAPTCHAs and the Robot in the Middle attack

A CAPTCHA is a visual test of humanity used to prevent machines from performing an operation that is intended to be performed only by people. Many internet services use this to prevent mass automatic access to their services. For example, Google requires anyone registering a Gmail account to pass a CAPTCHA test.

The most commonly used CAPTCHA is a request to identify letters that are presented on the screen in a form which is difficult for OCR software to identify - see example on the right.

One way to circumvent CAPTCHAs is to use a Robot-in-the-Middle attack.

Thursday, August 18, 2011

Two bit attack reduces security effectiveness of AES by 70%!

Now how's that for a sensational headline? And it's true. A paper released today presents an attack that reduces the computational complexity of brute forcing an AES-128 key to 2 to the power of 126.1 - which means such an attack would take only about 30% of the time it would take to do the full 2 to the power of 128 exhaustive search. Similar reductions of about 2 bits are presented for AES-192 and AES-256.
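The arithmetic behind the headline can be checked in a few lines. This is just a back-of-the-envelope calculation using the two exponents quoted above; the variable names are mine.

```python
# Exponents quoted in the text: full search vs. the new attack on AES-128.
full_search_bits = 128.0
attack_bits = 126.1

# Fraction of the exhaustive-search work that the attack still requires.
fraction_of_full_search = 2.0 ** (attack_bits - full_search_bits)

print(f"Work remaining: {fraction_of_full_search:.1%}")
print(f"Reduction:      {1 - fraction_of_full_search:.1%}")
```

The remaining work comes out at roughly 27% of the full search, which is where the rounded "30%" and "70%" figures come from.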

Of course this attack doesn't have any practical impact - such an attack is still completely infeasible - but (as The H writes) it's a first dent in the full AES in over ten years of intensive cryptanalysis.

In American slang "two bit" means insignificant - so I guess one could call this a two-bit attack.

GPRS hacked: Who cares?

In case you weren't paying attention last week - Karsten Nohl and friends cracked the GPRS encryption scheme.

In this Forbes interview with Karsten the interviewer tried to get an answer on why the encryption scheme for GPRS was made weaker than that of the earlier GSM voice encryption scheme (A5/1 - which demanded much more effort to crack).

One point I didn't see mentioned is the fact that data communicated over GPRS can easily be encrypted at the application level, while voice is generally only secured at the GSM level. No serious security engineer would rely on the unknown proprietary GPRS encryption for securing sensitive data communications over GPRS when they can always add their own application-level encryption. If you know of a system that does rely on the GPRS encryption for such data - please leave a note in the comments below.

Blackhat US 2011: Impressions

I attended my first BlackHat conference a couple of weeks ago in Las Vegas. It was an interesting experience and I thought I’d share some of my thoughts.

Tuesday, August 16, 2011

The RSA SecurID debacle: Why it happened

The RSA SecurID saga was one of the more interesting security stories of 2011. Analyzing the background of this story can give some insight as to how security decisions are taken and why security systems fail.

The seven laws of security engineering

There are a few laws in the field of security engineering that impact many aspects of the discipline. Most of these laws are self-evident and well known, but applying them to real-world situations is difficult. In fact, most security failures in the field can be traced to one or more of these laws.

Following is a list of seven such laws with a short description of each law. Future posts will elaborate on these laws (and others) as part of an analysis of specific cases.

You might ask a security engineer if a certain system is secure. If they give you an answer which sounds evasive and noncommittal that’s good – otherwise they’re not telling you the whole truth.

Because the truth is that no system is 100% secure in and of itself. The most a security engineer can say is that under certain assumptions the system is secure.
